Domain Controller Integration Challenges No One Should Ignore

As vehicles evolve into software-defined platforms, domain controller integration is becoming a decisive factor in system performance, safety, and scalability. For technical evaluators, overlooking hidden challenges, from cross-domain communication and thermal constraints to architecture compatibility and supplier coordination, can lead to costly delays and reliability risks. Understanding these issues early is essential to building smarter, more resilient automotive electronics strategies.

For technical evaluation teams, the real question is not whether a domain controller can consolidate functions on paper. It is whether the integration path can survive real vehicle constraints: mixed legacy networks, thermal peaks, timing conflicts, cybersecurity exposure, software update complexity, and multi-supplier execution risk. In practice, most failed or delayed programs do not fail because the concept is wrong. They fail because early assessments underestimate integration friction.

The questions evaluators bring to domain controller integration are highly practical. They want to identify the non-obvious integration problems that affect platform feasibility, sourcing decisions, validation effort, and future scalability. They are looking for decision-grade insight: what can go wrong, how to detect it early, and which technical signals separate a robust architecture from a risky one.

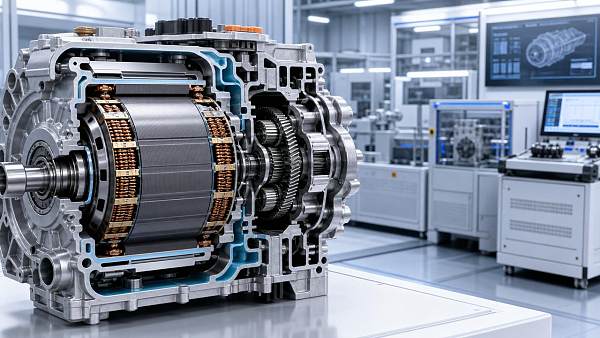

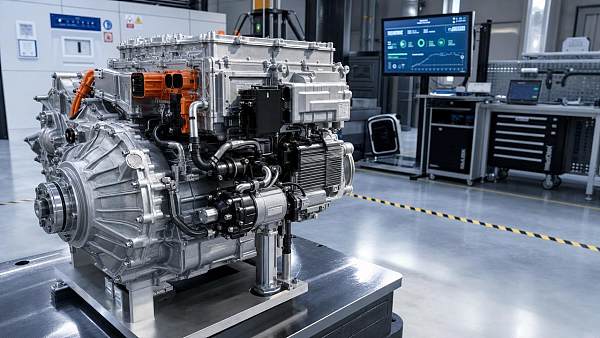

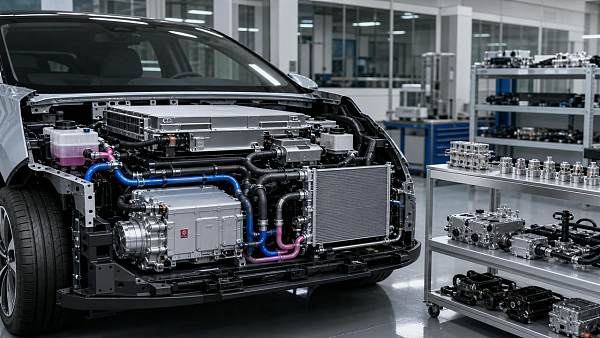

For automotive electronics programs spanning smart cockpit, thermal management, steering, body control, and high-speed communication, the value of a domain controller lies in centralization, reduced ECU count, software reuse, and better compute allocation. But integration is rarely a simple “replace many boxes with one box” exercise. It is a system redesign that reaches wiring harness architecture, power distribution, cooling strategy, middleware, diagnostics, and organizational structure.

Why domain controller integration becomes risky faster than many evaluations assume

Many technical assessments begin with expected benefits: lower hardware count, reduced weight, consolidated software, and easier over-the-air upgrade management. These benefits are real. However, they are often modeled in isolation, while actual integration depends on how many timing-critical, safety-related, and comfort-related functions are being collapsed into one computational zone.

In modern vehicle platforms, a domain controller may sit at the center of smart cabin electronics, body functions, ADAS-related data exchange, or thermal coordination logic. This means it becomes a convergence point for different engineering cultures, different software maturity levels, and different communication expectations. Once this convergence starts, hidden constraints emerge quickly.

The most common evaluation error is to treat integration as a hardware selection problem. In reality, it is an architecture compatibility problem. Compute performance, memory size, and interface count matter, but they do not by themselves answer whether the controller can coordinate multiple domains without introducing latency, thermal instability, diagnostic blind spots, or validation overload.

Technical evaluators should assume from the beginning that any domain controller project carries four classes of risk: architecture mismatch, execution complexity, lifecycle maintainability, and cross-supplier responsibility gaps. If those four dimensions are not assessed together, early business cases often look cleaner than the actual deployment path.

Are cross-domain communication demands already beyond what the architecture can safely handle?

One of the biggest reasons domain controller programs run into trouble is underestimating communication complexity. Consolidation increases the volume, variety, and urgency of data exchanged inside the vehicle. A controller that must simultaneously manage infotainment coordination, body electronics, thermal requests, gateway logic, and safety-relevant signals faces far more than raw bandwidth pressure.

Latency determinism becomes a major issue. It is not enough for messages to arrive; they must arrive within predictable timing windows under varying processor loads. In mixed-domain vehicles, some functions tolerate delay, such as user interface transitions, while others do not, such as steering-related status propagation, battery cooling requests, or fault response coordination. When these coexist on a shared platform, timing conflicts become a serious integration hazard.
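A minimal sketch of how this distinction could be checked against recorded latency samples follows. The signal names, deadlines, and sample values are hypothetical, and the check is deliberately simple: it judges each signal by its worst observed latency rather than by an average, because an average can look healthy while individual deadlines are missed.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class TimingBudget:
    signal: str          # hypothetical signal name
    deadline_ms: float   # assumed end-to-end deadline for this signal


def check_deadlines(budgets: List[TimingBudget],
                    latencies_ms: Dict[str, List[float]]) -> Dict[str, dict]:
    """Compare measured latencies with each signal's deadline."""
    results = {}
    for budget in budgets:
        samples = latencies_ms.get(budget.signal, [])
        if not samples:
            # Missing evidence is treated as a failure, not as a pass.
            results[budget.signal] = {"worst_ms": None, "misses": 0, "passed": False}
            continue
        misses = sum(1 for s in samples if s > budget.deadline_ms)
        results[budget.signal] = {
            "worst_ms": max(samples),
            "misses": misses,
            "passed": misses == 0,
        }
    return results


budgets = [
    TimingBudget("steering_status", deadline_ms=10.0),
    TimingBudget("hmi_transition", deadline_ms=200.0),
]
measured = {
    "steering_status": [4.0, 6.5, 12.1],    # mean is under 10 ms, worst case is not
    "hmi_transition": [80.0, 150.0, 190.0],
}
report = check_deadlines(budgets, measured)
for name, row in report.items():
    print(name, row)
```

In a real program the samples would come from traces captured under varying processor and network load, which is where delay-tolerant and delay-intolerant functions start to compete.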

Another challenge is protocol coexistence. Many vehicle programs still combine CAN, LIN, Automotive Ethernet, FlexRay in legacy cases, and proprietary supplier interfaces. A domain controller that looks capable at the interface level may still struggle when real network translation, gateway prioritization, and synchronized diagnostics are added. Evaluators should ask whether the architecture was designed for protocol convergence or merely equipped to connect to multiple buses.

Data ownership also matters. As signals move from dedicated ECUs into centralized compute platforms, questions arise about where truth is maintained, how arbitration works when requests conflict, and which subsystem has authority during degraded operation. These issues become critical when thermal management, cabin functions, and body controls interact. Without a clear signal governance model, software integration slows and fault tracing becomes expensive.
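To make the arbitration question concrete, here is a small sketch of one possible rule set, assuming a fixed priority per requesting subsystem and a restricted list of subsystems that keep authority in degraded operation. The subsystem names, priorities, and the degraded-mode list are illustrative assumptions, not a description of any particular platform.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Request:
    source: str     # hypothetical requesting subsystem
    value: float    # requested setpoint, e.g. compressor speed in rpm
    priority: int   # lower number means higher authority (assumed convention)


# Assumed policy: only these sources may command the actuator when degraded.
DEGRADED_AUTHORITIES = {"battery_thermal", "safety_monitor"}


def arbitrate(requests: Iterable[Request], degraded: bool = False) -> Optional[Request]:
    """Return the winning request, or None if no source has authority."""
    candidates = [
        r for r in requests
        if not degraded or r.source in DEGRADED_AUTHORITIES
    ]
    if not candidates:
        return None
    # min() keeps the first request on a priority tie, so input order is the tie-break.
    return min(candidates, key=lambda r: r.priority)


requests = [
    Request("cabin_comfort", value=2000.0, priority=3),
    Request("battery_thermal", value=4500.0, priority=1),
]
print(arbitrate(requests))                           # battery_thermal wins
print(arbitrate([requests[0]], degraded=True))       # no authority -> None
```

Writing the rules down in an executable form like this, however simple, forces the ownership questions to be answered explicitly instead of being left to whichever software component happens to write the signal last.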

The practical test is simple: can the proposed domain controller architecture maintain deterministic communication and fault isolation under peak network load, degraded bus conditions, and concurrent high-priority events? If suppliers cannot demonstrate that with credible validation evidence, the integration risk is higher than presentations suggest.
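One coarse input to that test is a utilization estimate for each bus at peak load. The sketch below assumes a classical CAN bus with 11-bit identifiers and uses a commonly cited worst-case frame length that includes bit stuffing and interframe space; the message set and bit rate are hypothetical. A utilization figure alone does not prove determinism, but a high value is an early warning that arbitration delays for lower-priority frames deserve closer analysis.

```python
from typing import List, Tuple


def worst_case_frame_bits(payload_bytes: int) -> int:
    """Assumed upper bound for a classical CAN frame with an 11-bit ID,
    including worst-case stuff bits and the 3-bit interframe space."""
    return 8 * payload_bytes + 47 + (34 + 8 * payload_bytes - 1) // 4


def bus_utilization(messages: List[Tuple[float, int]], bitrate_bps: int) -> float:
    """messages holds (period_ms, payload_bytes) for each periodic frame."""
    bits_per_second = sum(
        worst_case_frame_bits(payload) * 1000.0 / period_ms
        for period_ms, payload in messages
    )
    return bits_per_second / bitrate_bps


# Hypothetical peak-load message set on a 500 kbit/s bus.
peak_set = [(10.0, 8)] * 20 + [(100.0, 8)] * 30
load = bus_utilization(peak_set, bitrate_bps=500_000)
print(f"estimated utilization: {load:.1%}")
```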

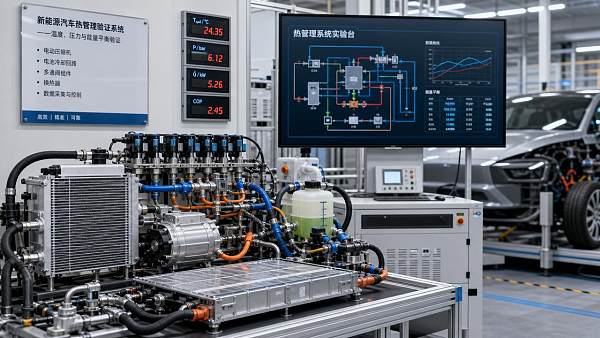

Can the thermal and power design support real operating conditions, not just benchmark scenarios?

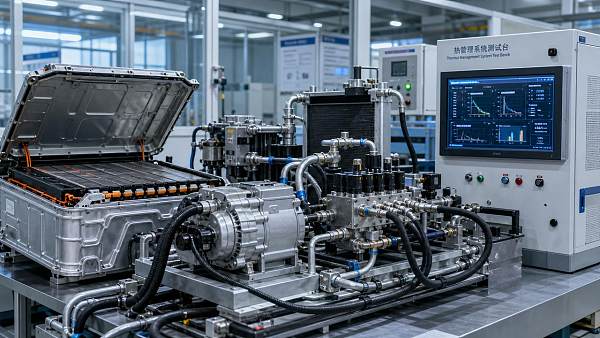

In automotive electronics, compute centralization generally pushes heat and power density upward. This is especially relevant for a domain controller that coordinates display-intensive smart cabins, connectivity, voice interaction, camera processing, and comfort-related control strategies. Thermal design is not a side topic. It directly affects reliability, performance throttling, package size, and placement feasibility.

Technical evaluators should examine the controller in the context of the full vehicle thermal environment. Cabin temperature extremes, solar load, limited airflow behind the instrument panel, cold-start conditions, vibration, and adjacent heat sources all influence controller behavior. A design that performs well on a bench may show instability when integrated into a tightly packaged cockpit or body zone.

Power integrity is equally important. Domain controllers often aggregate loads that were once distributed. This can amplify transient response issues, startup sequencing complexity, electromagnetic interference sensitivity, and fault propagation risk. If the power architecture is not carefully designed, one disturbance can affect multiple formerly independent functions at once.

For readers in sectors close to thermal systems, this point should be viewed strategically. The future of centralized automotive electronics is linked to thermal management quality. As compute and control nodes become more integrated, interactions between controller placement, HVAC ducting, passive dissipation, liquid-assisted concepts in advanced platforms, and system energy use become more consequential. Thermal architecture and electronics architecture can no longer be reviewed separately.

Ask suppliers for worst-case thermal maps, derating logic, sustained-load behavior, and environmental margin strategy. If they only provide typical-case figures, the assessment is incomplete. A domain controller is only as scalable as its ability to remain stable across real duty cycles.
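As an illustration of what derating logic can look like, the sketch below assumes a simple linear reduction of the allowed power budget between a derating threshold and a shutdown threshold. The thresholds and the 40 W budget are hypothetical; real controllers may use stepped, hysteretic, or multi-sensor schemes, which is exactly the detail worth requesting from suppliers.

```python
def derated_power_limit(temp_c: float,
                        full_limit_w: float,
                        derate_start_c: float,
                        shutdown_c: float) -> float:
    """Allowed power at a given temperature under an assumed linear derating curve."""
    if shutdown_c <= derate_start_c:
        raise ValueError("shutdown_c must be above derate_start_c")
    if temp_c <= derate_start_c:
        return full_limit_w
    if temp_c >= shutdown_c:
        return 0.0
    remaining = (shutdown_c - temp_c) / (shutdown_c - derate_start_c)
    return full_limit_w * remaining


# Hypothetical controller: 40 W budget, derating from 95 degC, shutdown at 115 degC.
for temp in (85.0, 105.0, 120.0):
    print(temp, derated_power_limit(temp, 40.0, 95.0, 115.0))
```

Comparing a curve like this with the sustained load of the consolidated functions shows at which temperature the controller can no longer run everything it hosts, which is a more useful figure than a typical-case power number.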

Does the software stack support integration, or does it hide long-term maintenance risk?

For most technical evaluators, software is where domain controller integration shifts from promising to uncertain. Hardware consolidation may be visible, but the software burden expands rapidly. Different functions often come with different code bases, operating assumptions, safety levels, update cycles, and supplier ownership models. Simply placing them on one controller does not make them software-compatible.

Middleware maturity is one of the first things to inspect. Can the stack support service-oriented communication, containerized or partitioned workloads where needed, secure boot, safe state management, and consistent diagnostics? If not, the controller may function during demonstration phases but become difficult to scale across variants and model years.

Partitioning strategy is another major issue. Centralized compute creates efficiency, but it also increases the consequence of software interference. A memory leak, scheduler conflict, or poorly isolated update process can affect multiple vehicle functions. Evaluators should look for strong separation between safety-relevant and non-safety workloads, as well as a clear fallback strategy if one software domain fails.

OTA readiness deserves scrutiny too. Many domain controller programs advertise update flexibility, but true maintainability requires version control discipline, rollback mechanisms, cybersecurity validation, dependency mapping, and post-update diagnostic visibility. If update governance is immature, the benefits of centralization can turn into field quality risk.
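Dependency mapping in particular lends itself to a simple pre-installation check. The sketch below assumes that each component declares minimum versions of the components it depends on and that versions are comparable tuples; the component names and version numbers are hypothetical. It reports which requirements would be violated after an update is applied, so the campaign can be blocked or extended before it reaches the vehicle.

```python
from typing import Dict, List, Tuple

Version = Tuple[int, ...]


def unmet_dependencies(installed: Dict[str, Version],
                       update: Dict[str, Version],
                       requires: Dict[str, Dict[str, Version]]) -> List[tuple]:
    """List (component, dependency, minimum, actual) for every violated requirement
    in the software set that would exist after the update."""
    after_update = {**installed, **update}
    problems = []
    for component, deps in requires.items():
        if component not in after_update:
            continue  # requirement belongs to a component that is not present
        for dep, minimum in deps.items():
            actual = after_update.get(dep)
            if actual is None or actual < minimum:
                problems.append((component, dep, minimum, actual))
    return problems


installed = {"hmi_app": (2, 1, 0), "middleware": (1, 4, 0), "thermal_svc": (3, 0, 2)}
requires = {"hmi_app": {"middleware": (1, 5, 0)}}   # assumed needs of the new hmi_app

partial = unmet_dependencies(installed, {"hmi_app": (3, 0, 0)}, requires)
complete = unmet_dependencies(
    installed, {"hmi_app": (3, 0, 0), "middleware": (1, 5, 0)}, requires
)
print(partial)
print(complete)
```

The same dependency map can drive rollback: if the update set must be reverted, the check can be rerun against the previous versions to confirm that the restored combination is itself consistent.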

Software toolchain compatibility across suppliers is often underestimated. Integration slows when teams use different assumptions for model-based development, AUTOSAR interpretation, cybersecurity evidence, logging standards, or test automation coverage. These mismatches rarely appear in early architecture slides, but they significantly affect launch readiness.

A reliable domain controller strategy therefore needs more than compute capacity. It needs a software integration model that remains supportable across suppliers, vehicle derivatives, and continuous update cycles. For evaluators, this is often the true pass-or-fail criterion.

How much legacy compatibility is enough before centralization starts hurting program efficiency?

Most vehicle manufacturers and Tier 1 suppliers cannot redesign everything at once. As a result, domain controller integration often happens in a hybrid environment where legacy ECUs, existing harness topology, prior-generation sensors, and established supplier modules must remain in the system. This is where architecture ambition meets practical limitation.

Backward compatibility is useful, but it has a cost. Every retained legacy interface can add software adaptation work, gateway burden, diagnostic exceptions, and validation permutations. At some point, preserving old structures reduces the value of centralization. Evaluators need to know where that point is for their platform.

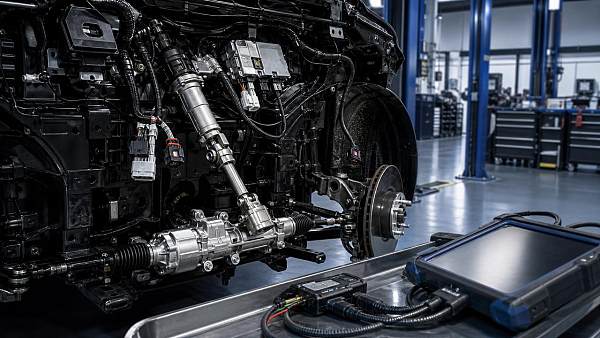

Wiring harness implications are particularly important. A domain controller may reduce ECU count, yet still increase harness complexity if zonal boundaries, signal routing, shielding, power lines, and serviceability were not redesigned accordingly. In some cases, projected mass or cost savings disappear because the electrical architecture remains halfway between distributed and centralized logic.

This is why technical assessment should include architecture fit, not only component fit. If the surrounding E/E platform is not ready for meaningful controller centralization, adopting a powerful domain controller may simply create an expensive translation layer between old and new design philosophies.

A good evaluation asks: what percentage of the vehicle architecture is truly prepared to benefit from controller consolidation? If the answer is low, phased integration with stricter scope control may be better than aggressive centralization.

What hidden validation burden comes with a domain controller decision?

A domain controller usually reduces the number of discrete hardware boxes, but validation effort rarely falls in proportion. In fact, it often increases system-level test complexity because more functions, states, and failure modes are now coupled. This is one of the most overlooked realities in sourcing and platform planning.

Traditional ECU validation can remain relatively contained. With a domain controller, validation becomes combinatorial. Teams must test not only each function, but interactions among functions during nominal operation, degraded modes, startup and shutdown transitions, cybersecurity events, thermal stress, and software update scenarios. The state space grows quickly.
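A rough count shows why. The sketch below multiplies a hypothetical number of levels per test dimension and then applies the result to every pair of hosted functions. All figures are illustrative assumptions; real programs prune this space with risk-based selection, but the starting point is still far larger than a per-function test list suggests.

```python
from math import comb, prod

# Hypothetical number of levels considered in each test dimension.
dimensions = {
    "operating_mode": 4,   # nominal, degraded, startup, shutdown
    "thermal_state": 3,    # cold, typical, hot
    "network_state": 3,    # healthy, high load, bus fault
    "update_state": 2,     # idle, update in progress
}
hosted_functions = 12      # assumed number of functions on the controller

conditions = prod(dimensions.values())          # combined operating conditions
function_pairs = comb(hosted_functions, 2)      # pairwise interactions to consider
pairwise_scenarios = conditions * function_pairs

print(conditions, function_pairs, pairwise_scenarios)
```

With these assumptions, 12 functions tested in isolation across operating modes give 48 cases, while pairwise interactions across all combined conditions give several thousand.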

Fault isolation becomes harder as well. In distributed systems, a malfunction may be tied to a specific ECU. In centralized systems, the root cause may involve shared compute resources, middleware services, communication arbitration, power conditions, or thermal throttling. Without strong observability and logging design, troubleshooting time increases.

For technical evaluators, this means test strategy should be reviewed before supplier nomination is finalized. Ask whether the validation plan includes hardware-in-the-loop, software-in-the-loop, network fault injection, thermal stress correlation, and degraded service testing at realistic system loads. If those capabilities are missing, timing and cost estimates are likely optimistic.

Another critical point is homologation and safety evidence. If the domain controller hosts mixed criticality functions, the burden of demonstrating compliance, traceability, and controlled interaction becomes more demanding. Validation is no longer just about feature correctness. It is about architectural proof.

Are supplier roles and integration ownership clearly defined?

Many domain controller challenges are organizational before they become technical. Because the controller sits across multiple functional domains, responsibility often becomes blurred. One supplier may provide hardware, another the BSP or middleware, several others application software, and the OEM may own integration logic or cybersecurity policy. Without a defined ownership model, issues remain unresolved for too long.

Technical evaluators should pay close attention to who owns system integration authority. If every supplier is only responsible for its own deliverable, cross-domain defects may fall into a gap. This is especially dangerous when thermal control requests, cockpit logic, gateway services, and body functions share common resources on the same controller.

Interface governance is another weak point. API definitions, signal timing expectations, diagnostic semantics, and fault-handling rules must be controlled centrally. If they are negotiated late or changed informally, integration instability follows. A technically strong controller cannot compensate for weak governance.
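One lightweight way to enforce central control is to keep a reference definition for every shared signal and compare each supplier's declared interface against it automatically. The sketch below assumes a minimal contract with a unit, a period, a timeout, and a fault substitute value; the field set and the example signal are hypothetical.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass(frozen=True)
class SignalContract:
    name: str
    unit: str
    period_ms: int       # expected transmission period
    timeout_ms: int      # time after which the receiver declares the signal lost
    fault_value: float   # substitute value used while the signal is invalid


def contract_mismatches(reference: SignalContract,
                        declared: SignalContract) -> List[str]:
    """Names of the fields where a supplier declaration differs from the reference."""
    return [
        f.name for f in fields(reference)
        if getattr(reference, f.name) != getattr(declared, f.name)
    ]


reference = SignalContract("cabin_temp_request", "degC", 100, 300, -40.0)
supplier = SignalContract("cabin_temp_request", "degC", 100, 500, -40.0)
print(contract_mismatches(reference, supplier))
```

A check of this kind can run on every interface change, so a mismatch such as a diverging timeout surfaces at review time rather than during vehicle integration.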

The best sourcing decisions therefore consider not only the maturity of the domain controller product, but the maturity of the supplier ecosystem around it. Can the involved parties support joint debugging, synchronized releases, cybersecurity response, and field issue closure? If not, even a sound architecture may struggle in production programs.

What should technical evaluators check before approving a domain controller path?

A strong evaluation framework should be structured around evidence, not ambition. First, verify architecture fit: network topology, harness implications, power design, thermal envelope, and safety partitioning. Second, assess software sustainability: middleware maturity, update strategy, diagnostics, and cross-supplier toolchain compatibility. Third, review execution readiness: validation coverage, observability, and integration ownership.
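The three-part structure can be captured in a simple scorecard. The sketch below assumes evidence scores from 0 to 5 per criterion and a hypothetical approval floor of 3.0, and it gates the decision on the weakest pillar instead of the overall average, so that strength in one area cannot hide a gap in another. The scores shown are invented for illustration.

```python
from statistics import mean
from typing import Dict


def evaluate(evidence: Dict[str, Dict[str, int]], floor: float = 3.0) -> dict:
    """Average each pillar's criteria and gate approval on the weakest pillar."""
    scores = {pillar: mean(criteria.values()) for pillar, criteria in evidence.items()}
    weakest = min(scores, key=scores.get)
    return {"scores": scores, "weakest": weakest, "approve": scores[weakest] >= floor}


# Hypothetical evidence scores (0 = no evidence, 5 = demonstrated under worst case).
evidence = {
    "architecture_fit": {
        "network_topology": 4, "harness": 3, "power_design": 4,
        "thermal_envelope": 3, "safety_partitioning": 4,
    },
    "software_sustainability": {
        "middleware_maturity": 3, "update_strategy": 2,
        "diagnostics": 3, "toolchain_compatibility": 2,
    },
    "execution_readiness": {
        "validation_coverage": 4, "observability": 3, "integration_ownership": 4,
    },
}
result = evaluate(evidence)
print(result["weakest"], result["approve"])
```

In this example the proposal would not be approved: architecture and execution look acceptable, but software sustainability falls below the floor and determines the outcome.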

It is also useful to score the proposal against future scalability. Can the domain controller support additional display functions, new thermal coordination logic, higher Ethernet traffic, or more advanced HMI workloads without major redesign? If scaling requires extensive rework, the platform may not be as future-ready as claimed.

For programs tied to electrification and intelligent cabins, evaluators should also study how the controller interacts with adjacent high-value systems such as HVAC controls, compressor strategies, battery thermal coordination, steering-related human-machine interfaces, and high-speed data paths. The closer the controller is to cross-functional orchestration, the more important system-level integration maturity becomes.

Ultimately, the best domain controller decisions come from teams that evaluate the controller as part of a living vehicle architecture, not as an isolated electronic module. That perspective reveals the hidden costs and the real strategic value.

Conclusion: the biggest domain controller risk is underestimating integration reality

Domain controller adoption is a necessary step in the transition toward software-defined vehicles, centralized compute, and more intelligent control of cabin, body, and thermal-related functions. But the main challenge is not conceptual. It is executional. Cross-domain communication, thermal and power limits, software maintainability, legacy compatibility, validation burden, and supplier coordination all shape whether centralization delivers its promise.

For technical evaluators, the smartest approach is to move beyond feature lists and compute specifications. A credible domain controller strategy must show deterministic communication, robust thermal behavior, maintainable software architecture, manageable validation scope, and clear integration ownership. If one of those pillars is weak, program risk rises quickly.

In other words, the domain controller that looks most advanced is not always the best choice. The best choice is the one whose integration path is technically realistic, operationally supportable, and scalable across the vehicle roadmap. That is the standard no serious evaluator should ignore.
