Autonomous Driving Data: What Makes It Useful Beyond Volume

Autonomous driving data is valuable not simply because of its scale, but because of its quality, context, and usability across real vehicle systems. For information researchers tracking intelligent mobility, understanding what makes data actionable is essential to evaluating safety, performance, and commercialization. This article explores the factors that turn raw autonomous driving data into strategic insight for the evolving automotive industry.

Why is autonomous driving data attracting so much attention beyond sheer dataset size?

The market often talks about autonomous driving data in terms of millions of miles, petabytes of sensor logs, or fleet-wide collection capacity. Those numbers matter, but volume alone does not explain whether the data can improve vehicle intelligence. For researchers, suppliers, and strategy teams, the more important question is whether a dataset helps decision-making in perception, control, validation, and product deployment.

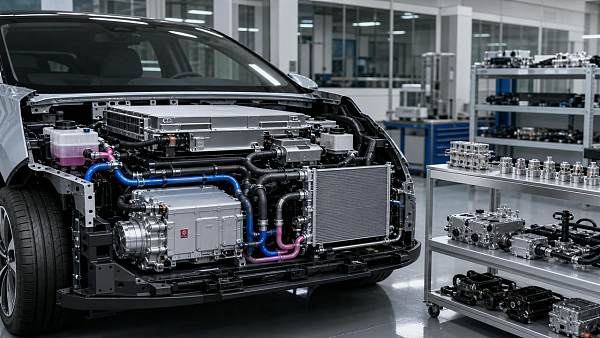

Useful autonomous driving data connects digital observations with real automotive behavior. A camera frame has more value when it is synchronized with steering input, braking response, wiring harness signal transmission, thermal conditions, and domain controller decisions. In other words, data becomes useful when it can explain what happened, why it happened, and how the system should respond next time.
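As a rough illustration of what synchronization means in practice, the sketch below pairs each camera frame timestamp with the nearest vehicle-state sample. The `VehicleSample` fields, the `align_frames` helper, and the 50 ms tolerance are assumptions made for this example, not a description of any particular logging stack.

```python
from bisect import bisect_left
from dataclasses import dataclass


@dataclass
class VehicleSample:
    t: float             # timestamp in seconds (hypothetical shared clock)
    steering_deg: float  # steering wheel angle
    brake_bar: float     # brake pressure


def nearest_sample(samples, t, max_gap=0.05):
    """Return the sample closest in time to t, or None if it is further than max_gap."""
    if not samples:
        return None
    times = [s.t for s in samples]  # samples are assumed sorted by time
    i = bisect_left(times, t)
    # Only the neighbours on either side of the insertion point can be nearest.
    candidates = samples[max(i - 1, 0): i + 1]
    best = min(candidates, key=lambda s: abs(s.t - t))
    return best if abs(best.t - t) <= max_gap else None


def align_frames(frame_times, samples, max_gap=0.05):
    """Pair every frame timestamp with its nearest vehicle-state sample (or None)."""
    return [(ft, nearest_sample(samples, ft, max_gap)) for ft in frame_times]


samples = [
    VehicleSample(0.00, 0.0, 0.0),
    VehicleSample(0.02, 1.0, 0.0),
    VehicleSample(0.04, 2.0, 5.0),
]
aligned = align_frames([0.031, 1.0], samples)
```

Frames that come back with `None` have no vehicle-state sample within tolerance. Counting them is one simple way to see how much of a dataset actually carries the control context described above.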

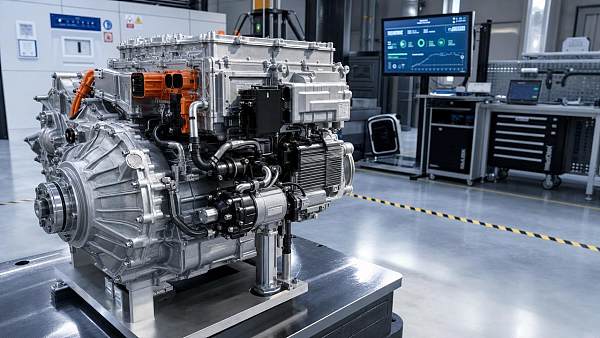

This is especially important in a supply chain shaped by electrification and intelligent mobility. High-level driving assistance does not depend on software in isolation. It depends on sensor integrity, low-latency electrical architecture, thermal stability of compute units, reliable steering redundancy, and consistent human-machine interaction. That is why autonomous driving data is increasingly evaluated not just as a digital asset, but as a system-level resource.

What actually makes autonomous driving data useful?

A practical way to evaluate autonomous driving data is to ask whether it supports learning, validation, and traceable engineering decisions. Five characteristics usually determine usefulness more than raw scale.

- Relevance: The data must match the target use case, such as urban intersections, highway lane changes, parking, night driving, or adverse weather.

- Quality: Signals should be clean, time-synchronized, correctly labeled, and free from critical corruption or ambiguity.

- Diversity: The dataset should cover rare but safety-critical situations, not only routine and easy scenarios.

- Context: Environmental, vehicle-state, thermal, and driver-interaction metadata must accompany the raw sensor stream.

- Usability: Engineers should be able to retrieve, process, compare, and audit the data efficiently across teams.

For example, a massive archive of daytime highway footage may still be weak if it lacks edge cases such as glare, worn lane markings, construction zones, emergency vehicle encounters, or steering fallback events. By contrast, a smaller but well-structured dataset with strong annotations, balanced scenarios, and clear links to vehicle actuation can deliver more value to model training and safety review.
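One way to make this comparison concrete is a coverage report over scenario tags. The sketch below assumes each clip carries a list of tags. The tag names, the `REQUIRED_SCENARIOS` set, and the `coverage_report` helper are hypothetical choices for illustration, derived from the edge cases named above.

```python
from collections import Counter

# Hypothetical edge-case tags, taken from the examples in the text.
REQUIRED_SCENARIOS = {
    "glare",
    "worn_lane_markings",
    "construction_zone",
    "emergency_vehicle",
    "steering_fallback",
}


def coverage_report(clips, required=REQUIRED_SCENARIOS, min_count=1):
    """Summarize tag counts, missing edge cases, and the share of routine clips."""
    counts = Counter(tag for clip in clips for tag in clip["tags"])
    missing = sorted(tag for tag in required if counts[tag] < min_count)
    total = len(clips)
    # A clip is treated as "routine" when it carries none of the required tags.
    routine = sum(1 for clip in clips if not set(clip["tags"]) & required)
    routine_share = routine / total if total else 0.0
    return {"counts": dict(counts), "missing": missing, "routine_share": routine_share}


clips = [
    {"tags": ["highway", "daytime"]},
    {"tags": ["highway", "glare"]},
    {"tags": ["highway", "daytime"]},
    {"tags": ["construction_zone"]},
]
report = coverage_report(clips)
```

In this toy input, half of the clips are routine and three of the five edge cases never appear, which is the pattern the paragraph above warns about regardless of archive size.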

From the perspective of portals like GACT, usefulness also depends on whether autonomous driving data reflects interactions across key subsystems. Wiring harness performance influences signal fidelity and response timing. Electric power steering and steer-by-wire architectures determine how command outputs become controlled motion. Thermal management affects compute reliability, sensor operating range, and power efficiency. Data that captures these relationships is far more useful than isolated perception records.

How can information researchers judge data quality without being model developers?

Information researchers do not always need to inspect neural network pipelines directly. Instead, they can evaluate autonomous driving data through practical indicators that reveal engineering maturity and commercial readiness.

Researchers should also ask whether the data is representative of intended deployment markets. Autonomous driving data collected in one geography may not translate cleanly to another because of different lane standards, signage, driving culture, weather patterns, or infrastructure quality. A dataset can appear large and impressive while still being commercially narrow.

Another useful signal is the company’s process for closed-loop improvement. When organizations can show how field events become curated data, how that data feeds simulation and validation, and how updated findings affect hardware and software requirements, they usually have a stronger command of real-world intelligence development.

Which types of context make autonomous driving data more actionable in real vehicles?

Context is often the difference between data that is merely stored and data that can drive engineering action. In autonomous systems, the same road scene can lead to very different outcomes depending on the vehicle state and subsystem conditions surrounding it.

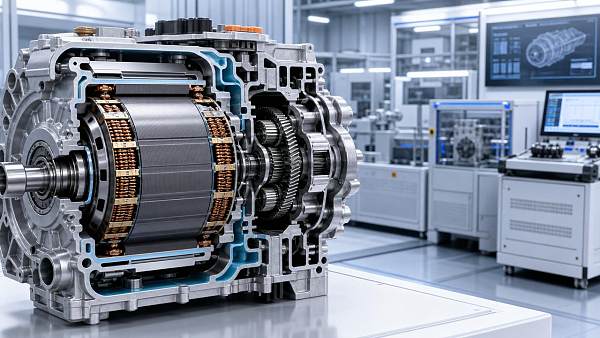

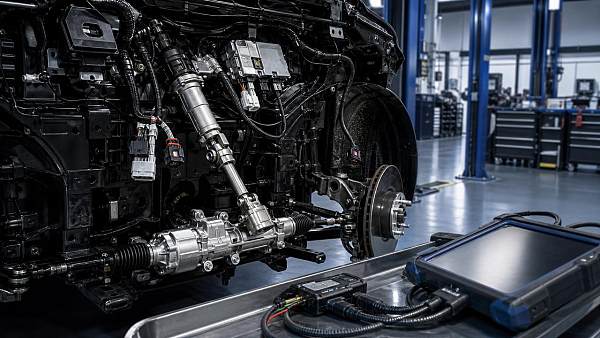

First, vehicle control context matters. Steering torque, brake pressure, motor output, and chassis response help engineers understand whether the system perceived correctly and acted appropriately. For advanced driver assistance and future autonomous platforms, electric power steering data is especially important because it connects digital planning with physical motion execution.

Second, electrical architecture context matters. Signal delay, communication stability, and high-voltage distribution can influence sensor fusion and controller response. As automotive wiring harnesses become more central to high-speed data transmission, autonomous driving data should not be separated from the hardware pathways that carry it.

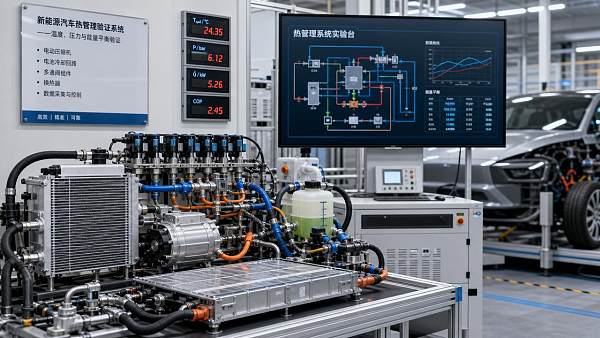

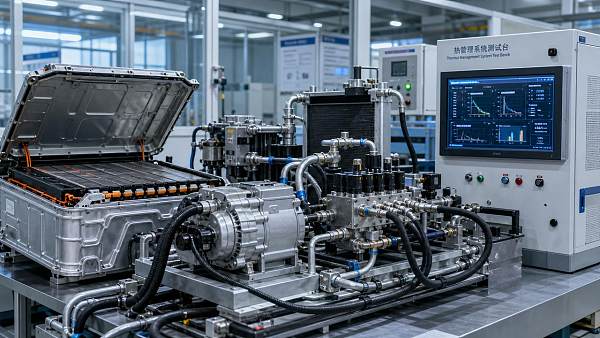

Third, thermal context matters more than many non-specialists expect. Compute platforms, cameras, radar modules, and cabin electronics all perform differently under heat stress or cold start conditions. In new energy vehicles, thermal management systems affect not only occupant comfort but also electronic stability, battery efficiency, and processing reliability. Autonomous driving data linked with temperature behavior can reveal failures or degradations that would remain invisible in ideal lab environments.
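A minimal sketch of linking perception outcomes to temperature follows. It groups detection records into temperature bands and reports the miss rate per band. The record fields `compute_temp_c` and `detected`, and the 20 °C band width, are assumptions for this example.

```python
from collections import defaultdict


def miss_rate_by_temperature(records, band_width_c=20):
    """Group records into temperature bands and return the miss rate per band."""
    totals = defaultdict(lambda: [0, 0])  # band start -> [misses, total]
    for r in records:
        # Floor to the start of the band, e.g. 85 °C falls in the 80 °C band.
        band = int(r["compute_temp_c"] // band_width_c) * band_width_c
        totals[band][1] += 1
        if not r["detected"]:
            totals[band][0] += 1
    return {band: misses / total for band, (misses, total) in sorted(totals.items())}


records = [
    {"compute_temp_c": 45, "detected": True},
    {"compute_temp_c": 55, "detected": True},
    {"compute_temp_c": 85, "detected": False},
    {"compute_temp_c": 95, "detected": True},
    {"compute_temp_c": 90, "detected": False},
]
rates = miss_rate_by_temperature(records)
```

A rising miss rate in the hotter bands would not prove a thermal cause on its own, but it points engineers toward the kind of degradation that ideal lab conditions hide.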

Fourth, human-machine interaction context matters. In-vehicle infotainment systems, alerts, displays, and takeover prompts shape how drivers respond during assisted or semi-automated driving. Data that captures warning timing, interface state, and driver intervention helps researchers assess whether the system is understandable as well as technically capable.
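Warning timing and driver intervention can be combined into a simple takeover latency measure. The sketch below assumes a time-ordered event log with two hypothetical event kinds, `takeover_prompt` and `driver_intervention`. Real logs will likely use different names and richer interface state.

```python
def takeover_latencies(events):
    """Return seconds between each takeover prompt and the next driver intervention.

    events: list of (timestamp_s, kind) tuples sorted by time.
    Repeated prompts before an intervention are measured from the first prompt.
    Interventions with no preceding prompt are ignored.
    """
    latencies = []
    pending_prompt = None
    for t, kind in events:
        if kind == "takeover_prompt" and pending_prompt is None:
            pending_prompt = t
        elif kind == "driver_intervention" and pending_prompt is not None:
            latencies.append(t - pending_prompt)
            pending_prompt = None
    return latencies


events = [
    (10.0, "takeover_prompt"),
    (11.5, "driver_intervention"),
    (20.0, "driver_intervention"),   # unprompted, ignored
    (30.0, "takeover_prompt"),
    (30.5, "takeover_prompt"),       # repeated prompt, latency counts from 30.0
    (32.0, "driver_intervention"),
]
latencies = takeover_latencies(events)
```

Measuring from the first prompt is a design choice in this sketch. Measuring from the last prompt instead would understate how long the driver actually took to respond.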

What are the most common mistakes when people evaluate autonomous driving data?

One common mistake is assuming more collection automatically means better intelligence. In reality, duplicate routine driving can inflate storage but add little learning value. If the dataset is dominated by easy cases, the system may still fail in rare but critical events.

A second mistake is focusing only on perception inputs while ignoring downstream execution. Autonomous driving data should show whether the vehicle could act safely through steering, braking, and power management, not just whether it identified an object on screen.

A third mistake is neglecting lifecycle usability. Some organizations gather vast data lakes that are hard to search, expensive to label, and disconnected from test cases. Data loses strategic value when engineers cannot locate comparable events, reproduce failures, or trace updates across versions.

A fourth mistake is underestimating hardware dependencies. If a sensor problem was caused by condensation, thermal drift, connector instability, or electrical noise, software-only analysis will produce incomplete conclusions. This is why cross-domain intelligence matters. The best autonomous driving data frameworks integrate software logs with component-level evidence from thermal systems, steering systems, and electrical distribution.

Finally, some evaluations confuse compliance messaging with operational readiness. Public claims about miles driven or simulation scale are not enough. Researchers should look for proof of scenario coverage, retraining discipline, validation governance, and hardware-software co-optimization.

How does useful autonomous driving data support commercialization and supplier strategy?

Commercialization depends on repeatable safety, scalable engineering workflows, and manageable costs. Useful autonomous driving data reduces uncertainty across all three. It helps OEMs define realistic operating design domains, helps Tier 1 suppliers align component specifications with software needs, and helps investors or analysts separate technical substance from marketing language.

For suppliers, the implications are highly practical. A steering supplier may use field and validation data to refine redundancy logic and torque response under assisted driving scenarios. A wiring harness supplier may evaluate electromagnetic robustness and bandwidth requirements as sensor suites expand. A thermal systems company may identify how processor heat loads, heat pump strategies, and ambient extremes affect autonomous computing stability. An IVI stakeholder may study how driver prompts and cockpit integration influence safe handover behavior.

This is where strategic intelligence becomes valuable. Useful autonomous driving data does not only train algorithms; it also informs sourcing, architecture roadmaps, risk prioritization, and the assessment of technical barriers. In a highly competitive global supply chain, the organizations that best connect data with component evolution are often the ones that build more defensible positions.

If a company wants to assess autonomous driving data partnerships or solutions, what should it confirm first?

Before choosing a dataset provider, platform partner, or validation collaborator, companies should clarify a few questions. What deployment scenarios matter most? Which failures are most costly or safety-critical? How well does the available autonomous driving data connect to real vehicle subsystems? How is effort divided among collection, labeling, curation, simulation, and revalidation? And can the partner explain how data leads to design changes rather than just dashboards?

It is also wise to confirm whether the solution supports long-term iteration. Intelligent mobility programs evolve quickly, and static datasets age fast as sensors, compute platforms, regulations, and regional applications change. The strongest partners usually offer not only access to autonomous driving data, but also methods for scenario expansion, version control, root-cause traceability, and system-level interpretation.

For information researchers, the core takeaway is simple: useful autonomous driving data is not defined by volume alone. It is defined by relevance, quality, diversity, context, and usability, all measured against real vehicle behavior. If you need to confirm a specific technical path, sourcing direction, validation cycle, commercialization risk, or cooperation model, start by discussing scenario coverage, subsystem linkage, data governance, and the exact engineering decisions the data is expected to support.
